import time

import numpy as np

import matplotlib.pyplot as plt

n = 1000

val = 5.4Debugging & Profiling

Time efficiency

Time/computation: usage of %timeit

References:

magic commands are IPython commands such as: %timeit, %matplotlib, %autoreload. They work only in interactive cases (Ipython, Jupyter Notebook, Jupyter lab, etc.).

Let us compare the time to create a vector of size n and fill it with the value val with various methods, using the `

print('Using empty and fill:')

%timeit a = np.empty(n); a.fill(val)

# Alternative: uncomment below

# from IPython import get_ipython

# get_ipython().run_line_magic('timeit', 'a = np.empty(n); a.fill(val)')Using empty and fill:

700 ns ± 17.7 ns per loop (mean ± std. dev. of 7 runs, 1,000,000 loops each)print('Using empty and list syntax:')

%timeit a = np.empty(n); a[:] = valUsing empty and list syntax:

886 ns ± 2.58 ns per loop (mean ± std. dev. of 7 runs, 1,000,000 loops each)print('Using full:')

%timeit a = np.full((n,), val)Using full:

1.47 µs ± 9.6 ns per loop (mean ± std. dev. of 7 runs, 1,000,000 loops each)print('Using ones:')

%timeit a = np.ones(n) * valUsing ones:

3.06 µs ± 69.1 ns per loop (mean ± std. dev. of 7 runs, 100,000 loops each)print('Using repeat:')

%timeit a = np.repeat(val, n)Using repeat:

2.5 µs ± 23.3 ns per loop (mean ± std. dev. of 7 runs, 100,000 loops each)Alternatives for time measurement

Another classical way to time a chunk of code is to use the time module, as follows:

import time

start = time.time()

a = np.ones(n) * val

end = time.time()

print(f"Execution time: {end - start:.5f} s.")Execution time: 0.00010 s.Memory efficiency

memory_profiler

You can run a memory profiler with the following magic command (other alternatives are available but require more coding and exporting the reports):

%load_ext memory_profilerFor illustration we compare a way to perform a distance matrix computation between two vectors, using a double loop or a vectorized approach.

n1 = 100

n2 = 100

x = np.random.randn(n1)

y = np.random.randn(n2)%%file mprun_demo.py

import numpy as np

def inv_distance(x, y):

n1 = len(x)

n2 = len(y)

dist = np.empty((n1, n2))

for i in range(n1):

for j in range(n2):

dist[i, j] = 1.0 / np.sqrt((x[i] - y[j]) ** 2)

return dist

def inv_distance_vect(x, y):

n1 = len(x)

n2 = len(y)

return 1.0 / np.sqrt((x.reshape((n1, 1)) - y.reshape((1, n2))) ** 2)Overwriting mprun_demo.pyWe can time the execution of the two functions:

from mprun_demo import inv_distance, inv_distance_vect

%timeit inv_distance(x, y)

%timeit inv_distance_vect(x, y)10.5 ms ± 97.5 µs per loop (mean ± std. dev. of 7 runs, 100 loops each)

33.2 µs ± 266 ns per loop (mean ± std. dev. of 7 runs, 10,000 loops each)The conclusion is simple: try to avoid loops in Python when performing matrix computation, and use vectorization and broadcasting as much as possible (or use numba or cython).

Some memory profiling can be performed with the memory_profiler package to investigate any difference in the memory footprint:

%mprun -f inv_distance inv_distance(x, y)%mprun -T mprun0 -f inv_distance_vect inv_distance_vect(x, y)

print(open('mprun0', 'r').read())

*** Profile printout saved to text file mprun0.

Filename: /home/bcharlier/enseignement/HAB796B9/2023-24/HAB796B9/Courses/ProfilingDebugging/mprun_demo.py

Line # Mem usage Increment Occurrences Line Contents

=============================================================

14 154.5 MiB 154.5 MiB 1 def inv_distance_vect(x, y):

15 154.5 MiB 0.0 MiB 1 n1 = len(x)

16 154.5 MiB 0.0 MiB 1 n2 = len(y)

17 154.6 MiB 0.1 MiB 1 return 1.0 / np.sqrt((x.reshape((n1, 1)) - y.reshape((1, n2))) ** 2)In this case, the difference is almost negligible concerning memory, only the time computation was significantly different between the two approaches.

Profiling

A profile is a set of statistics that describes the time taken by various parts of a program.

cProfile

The cProfile module provides deterministic profiling of Python programs. A profile is a set of statistics describing how often and for how long various parts of the program run.

Let us illustrate the profiling for some of the previous examples.

import cProfile

cProfile.run('a = np.empty(n); a.fill(val)','fill.prof')cProfile.run('a = np.ones(n) * val','ones.prof')cProfile.run('inv_distance_vect(x, y)', 'inv_distance_vect.prof')line_profiler

You can use inline magic commands to profile a function line by line.

%load_ext line_profiler%lprun -f inv_distance_vect inv_distance_vect(x, y)or

%lprun -T lprof0 -f inv_distance inv_distance(x, y)

print(open('lprof0', 'r').read())

*** Profile printout saved to text file 'lprof0'.

Timer unit: 1e-09 s

Total time: 0.0163658 s

File: /home/bcharlier/enseignement/HAB796B9/2023-24/HAB796B9/Courses/ProfilingDebugging/mprun_demo.py

Function: inv_distance at line 4

Line # Hits Time Per Hit % Time Line Contents

==============================================================

4 def inv_distance(x, y):

5 1 1348.0 1348.0 0.0 n1 = len(x)

6 1 386.0 386.0 0.0 n2 = len(y)

7 1 5811.0 5811.0 0.0 dist = np.empty((n1, n2))

8 101 18375.0 181.9 0.1 for i in range(n1):

9 10100 2172427.0 215.1 13.3 for j in range(n2):

10 10000 14167141.0 1416.7 86.6 dist[i, j] = 1.0 / np.sqrt((x[i] - y[j]) ** 2)

11 1 344.0 344.0 0.0 return distAn alternative is to use the kernprof package and @profile decorator, see examples here.

Visualization of the profiling results

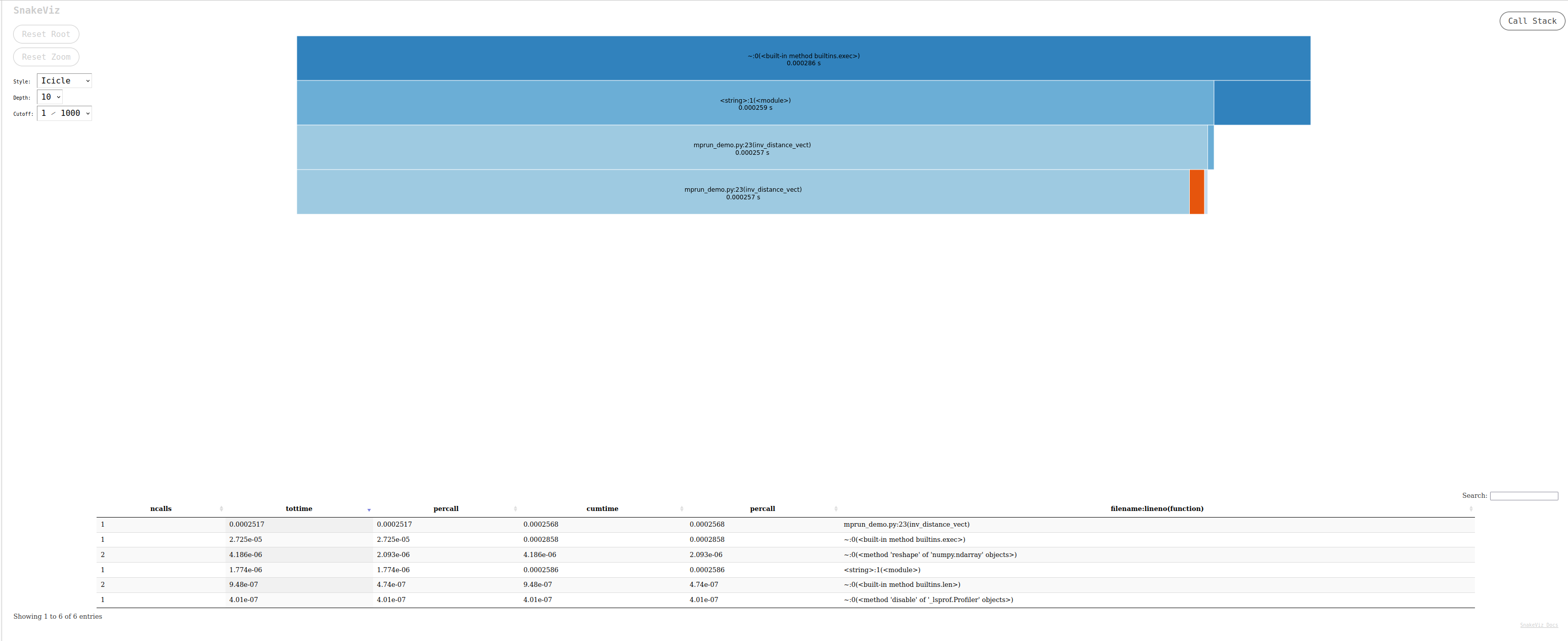

snakeviz: a browser-based graphical viewer for the output of Python’scProfile. Here is a video tutorial. Launch the commandsnakeviz program.prof

%load_ext snakeviz

%snakeviz -t inv_distance(x, y)

*** Profile stats marshalled to file '/tmp/tmpc08lm1de'.

Opening SnakeViz in a new tab...The output generated is an interactive file that can be investigated in a browser, looking like:

gprof2dota Python script to convert the output from many profilers (includingcProfile’s output) into a dot graph, with export in .svg. You can run it in your terminal with the following commands:default python -m cProfile -o output.pstats inv_distances.py gprof2dot.py -f pstats output.pstats | dot -Tsvg -o output.svgYou can then display the .svg file:`viztracer: a nice solution that provides html output with interactive visualization. This can lead to very large traces for non-trivial programs. In this case it is recommended to trim the shortest function calls from the trace file before attempting to load it in the visualizer (See filter options here).

py-spy: this package can be combined withspeedscopefor nice visualization.

Debugging with pdb

Let us use import pdb; pdb.set_trace() to enter a code and inspect it. Push the key c and then enter to go next.

A first recommendation is to use the python debugger in your IDE.

Pure python or IPython can use the pdb package and the command pdb.set_trace(). A command prompt launches when an error is met, and you can check the current status of the environment. Useful shortcuts are available (e.g., the c key or the j key, etc.); a full list is available here. For instance, you can quit the debugger with the command q or quit

def function_debile(x):

answer = 42

answer += x

return answerfunction_debile(12)def illustrate_pdb(x):

answer = 42

for i in range(10):

import pdb; pdb.set_trace()

answer += i

answer += x

return answerillustrate_pdb(12)A terminal is launched when a problem occurs, and one can then take over and see what’s going on.

get_ipython().run_line_magic('pdb', '')

# %pdbdef blobl_func(x):

answer = 0

for i in range(x, -1, -1):

print(i)

answer += 1 / i

return answer

blobl_func(4)References:

- Debugging Jupyter notebooks by David Hamann

- Advanced Python Debugging with pdb by Steven Kryskalla

- Debug Python with VSCode